Networking Concepts

A quick guide to Networking for Developers


Introduction

Networking is a critical aspect of platform engineering, and it is essential to have a good understanding of its concepts. I busted my chops in networking and got my degree in network engineering. I have been pulling cable since I was eleven years old and LOVE talking about networking and diving deep to the packet level. I plan on writing an advanced guide to troubleshooting packet captures sometime in the future, but for now, I'll go over the basics. In this blog, we will cover both basic and advanced concepts of networking, finishing with cloud-native networking concepts.

Basic Concepts

What is Networking?

Networking is a fundamental aspect of modern computing, which connects computers, servers, and other devices to each other, enabling them to share data and resources. At its core, networking is about creating a communication pathway between devices, allowing them to exchange information. In order to achieve this goal, networking relies on a set of protocols, standards, and technologies. The OSI model is a conceptual framework used to describe the different layers of networking, from the physical layer to the application layer. Understanding the OSI model is crucial for anyone who wants to learn about networking, as it provides a foundation for understanding how data is transferred between devices on a network.

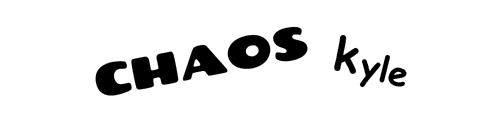

OSI Model

The OSI (Open Systems Interconnection) model is a conceptual framework used to describe the different layers of networking, from the physical layer to the application layer. Here is an infographic with some helpful animal acronyms to remember:

Developed by the International Organization for Standardization (ISO) in 1984, the OSI model serves as a common reference for understanding and designing communication protocols. The model breaks down communication processes into smaller components, allowing for improved interoperability, modular design, and easier troubleshooting. Each layer of the model represents a specific set of functions and communicates with the layers above and below it.
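To make the layering concrete, here is a minimal Python sketch of the seven layers and the idea that each layer talks only to the one directly above and below it. The example entries are my own rough illustrations, not an official mapping, and real protocols do not always fit neatly into a single layer.

```python
from enum import IntEnum


class OsiLayer(IntEnum):
    """The seven OSI layers, numbered from the bottom (1) to the top (7)."""
    PHYSICAL = 1
    DATA_LINK = 2
    NETWORK = 3
    TRANSPORT = 4
    SESSION = 5
    PRESENTATION = 6
    APPLICATION = 7


# Illustrative examples only (an assumption for this sketch).
EXAMPLES = {
    OsiLayer.PHYSICAL: "cables, radio signals",
    OsiLayer.DATA_LINK: "Ethernet, MAC addresses",
    OsiLayer.NETWORK: "IP, routing",
    OsiLayer.TRANSPORT: "TCP, UDP",
    OsiLayer.SESSION: "session setup and teardown",
    OsiLayer.PRESENTATION: "encoding, encryption",
    OsiLayer.APPLICATION: "HTTP, DNS",
}


def neighbors(layer):
    """Return the layers directly below and above (None at the edges)."""
    below = OsiLayer(layer - 1) if layer > OsiLayer.PHYSICAL else None
    above = OsiLayer(layer + 1) if layer < OsiLayer.APPLICATION else None
    return below, above


for layer in OsiLayer:
    print(int(layer), layer.name, "-", EXAMPLES[layer])
```

Calling `neighbors(OsiLayer.NETWORK)` returns the data link and transport layers, which is a handy way to reason about where a problem might sit when troubleshooting.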

LAN and WAN Networks

Local Area Networks (LAN) and Wide Area Networks (WAN) are two types of computer networks that differ in terms of their size, geographical scope, and complexity.

A LAN is a computer network that spans a small geographic area, such as an office, school, or home. The primary purpose of a LAN is to allow computers and devices within the network to share resources, such as printers, files, and applications. LANs are typically made up of a few hundred devices, and they are relatively easy to set up and manage. Ethernet is the most common LAN technology, and it uses a wired connection to connect devices.

A WAN, on the other hand, is a computer network that spans a large geographic area, such as a city, country, or even the world. WANs are designed to connect LANs in different locations so that they can share resources and communicate with each other. WANs are much more complex than LANs and require specialized hardware and software to run. The Internet is the largest example of a WAN, connecting billions of devices across the world.

The key difference between LAN and WAN is their size, scope, and complexity. LANs are small, simple networks that are easy to set up and manage, while WANs are much larger, more complex networks that require specialized hardware and software to run. Another significant difference is speed. LANs typically offer higher bandwidth and lower latency than WANs because traffic travels short distances over links the organization owns, rather than long distances over shared or leased carrier links. Finally, LANs are generally easier to secure than WANs because they are easier to control and monitor.

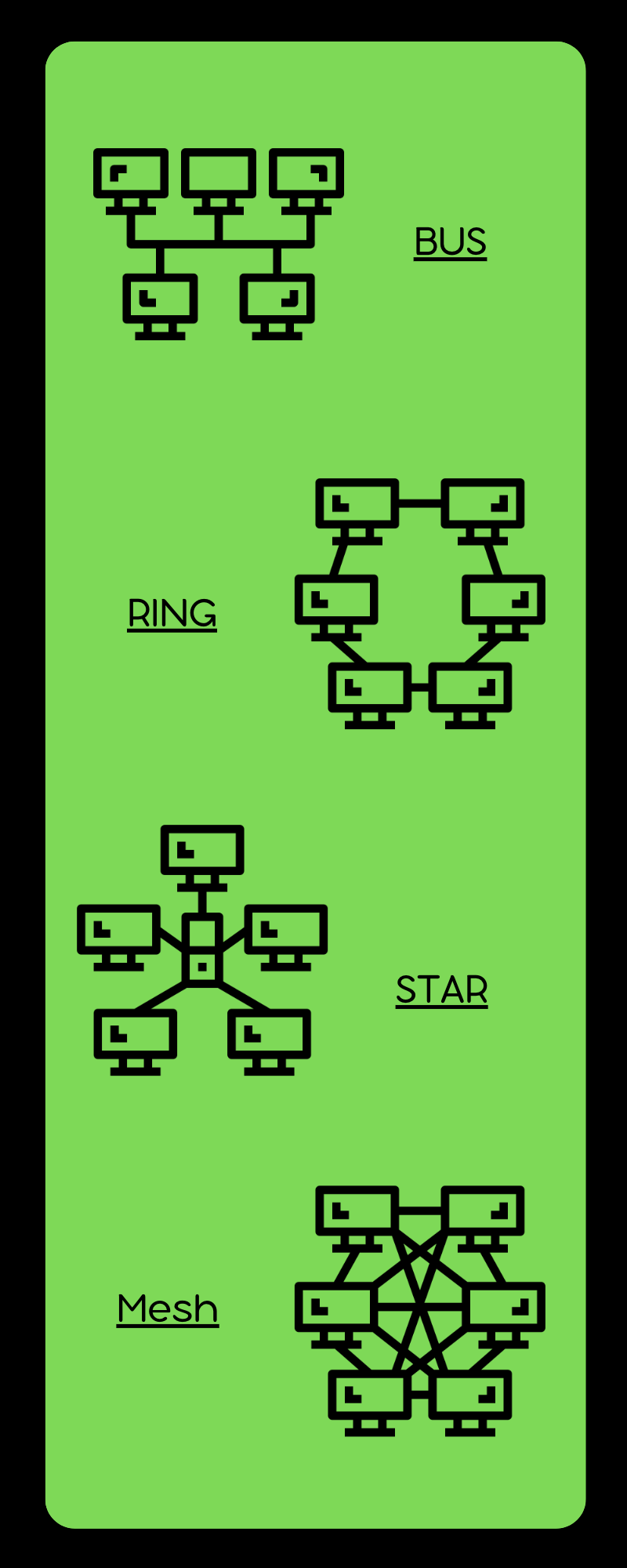

Network Topologies

Network topology refers to the physical or logical layout of a network. There are several different types of network topologies, each with its own advantages and disadvantages.

Bus topology is a type of network topology where all devices are connected to a single cable, called the bus. This topology was commonly used in older Ethernet networks. While it is easy to set up, it can be difficult to troubleshoot as a single fault in the cable can bring down the entire network.

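Ring topology, on the other hand, connects all devices in a closed loop, where each device is connected to two other devices. This topology is often used in Token Ring networks. It can be more fault-tolerant than bus topology when a second, redundant ring is present, but in a single ring one failed device or link can disrupt the whole loop. It can also suffer from slow data transfer speeds, because data passes through each device in turn on its way around the ring.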

Star topology is a network topology where each device connects to a central hub or switch. This is one of the most common network topologies used in LANs today. It is easy to add or remove devices from the network, and it is also easier to troubleshoot in case of a fault. However, this topology can be more expensive to set up than bus or ring topologies.

Mesh topology is a network topology where each device is connected to every other device in the network. This is the most fault-tolerant topology, as it can handle multiple failures without bringing down the entire network. However, it can be expensive to set up and difficult to manage as the number of devices increases.
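One way to see why mesh gets expensive is to count the cables. The sketch below is a rough model I put together for illustration: it assumes a full mesh (every pair of devices linked), a star with one central switch, and a single shared cable for a bus.

```python
def link_count(topology, n):
    """Number of links needed to connect n devices in the given topology."""
    if n < 2:
        return 0
    if topology == "bus":
        return 1  # one shared cable that every device taps into
    if topology == "ring":
        return n  # each device links to the next, closing the loop (n >= 3)
    if topology == "star":
        return n  # one link from each device to the central switch
    if topology == "mesh":
        return n * (n - 1) // 2  # every pair of devices gets its own link
    raise ValueError(f"unknown topology: {topology}")


for devices in (5, 10, 50):
    print(devices, "devices:", {t: link_count(t, devices)
                                for t in ("bus", "ring", "star", "mesh")})
```

With 10 devices a star needs 10 links while a full mesh needs 45, and the gap grows quadratically from there.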

These are just a few examples of network topologies, and each has its own advantages and disadvantages. The choice of topology depends on factors such as the size of the network, the number of devices, and the desired level of fault tolerance.

Network Devices

Network devices are hardware or software components that are used to connect devices within a network. They are responsible for ensuring that data is transmitted efficiently and securely between devices. Some common network devices include:

Routers: A router is a device that connects two or more networks and routes data packets between them. Routers use routing tables to determine the best path for data to travel between networks.

Switches: A switch is a device that connects multiple devices within a network and allows them to communicate with each other. Switches use MAC addresses to determine where to send data packets within a network.

Firewalls: A firewall is a device that is used to control access to a network and protect it from unauthorized access. Firewalls can be hardware or software-based and can be configured to filter traffic based on a set of rules.

Load Balancers: A load balancer is a device that distributes network traffic across multiple servers or devices, ensuring that no single device is overwhelmed with traffic.

Access Points: An access point is a device that allows wireless devices to connect to a wired network. Access points use Wi-Fi to transmit data between devices.

Modems: A modem is a device that connects a computer or network to the internet. Modems use a variety of technologies, including DSL, cable, and fiber to provide internet connectivity.

Each network device has its own specific function within a network, and they all work together to ensure that data is transmitted efficiently and securely. Understanding the role of each network device is crucial for designing and maintaining complex network infrastructures.

Network Protocols

TCP/IP

TCP/IP is a set of protocols used to connect devices on the internet. It stands for Transmission Control Protocol/Internet Protocol and is responsible for ensuring that data is transmitted correctly between devices. TCP is responsible for breaking data into segments, confirming that each one is received (and retransmitting any that are lost), and reassembling them in the correct order into the original data. IP is responsible for addressing and routing data between devices.
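Here is a small Python sketch of TCP in action: a throwaway echo server and a client talking over the loopback address. The function names are made up for this example, and it assumes a tiny message that arrives in a single `recv` call; real code has to loop, because TCP delivers a byte stream rather than discrete messages.

```python
import socket
import threading


def echo_once(server_sock):
    """Accept one connection and send back whatever bytes arrive."""
    conn, _addr = server_sock.accept()
    with conn:
        data = conn.recv(1024)
        conn.sendall(data)


def tcp_round_trip(message):
    """Send message to a local echo server over TCP and return the reply."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as server:
        server.bind(("127.0.0.1", 0))  # port 0 lets the OS pick a free port
        server.listen(1)
        port = server.getsockname()[1]

        worker = threading.Thread(target=echo_once, args=(server,))
        worker.start()

        # create_connection performs the TCP three-way handshake for us.
        with socket.create_connection(("127.0.0.1", port), timeout=5) as client:
            client.sendall(message)
            reply = client.recv(1024)

        worker.join()
    return reply


if __name__ == "__main__":
    print(tcp_round_trip(b"hello"))
```

IP handles getting the packets to `127.0.0.1`, and TCP handles the connection, ordering, and acknowledgements underneath those `sendall` and `recv` calls.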

IP Address and Subnet Mask

IP Address

An IP (Internet Protocol) address is a unique numerical identifier assigned to devices participating in a computer network using the Internet Protocol for communication. IP addresses serve two main functions: identifying the host or network interface and providing the location of the host in the network.

There are two versions of IP addresses in use:

IPv4: IPv4 (Internet Protocol version 4) is the most widely used version of the Internet Protocol. It uses 32-bit addresses, which are typically represented as four decimal numbers separated by periods (e.g., 192.168.1.1).

IPv6: IPv6 (Internet Protocol version 6) is the successor to IPv4, designed to address the exhaustion of IPv4 address space. It uses 128-bit addresses, which are represented as eight groups of hexadecimal numbers separated by colons (e.g., 2001:0db8:85a3:0000:0000:8a2e:0370:7334).
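If you want to poke at both address formats, Python's standard `ipaddress` module parses them directly. This is just a quick sketch using the two example addresses above.

```python
import ipaddress

v4 = ipaddress.ip_address("192.168.1.1")
v6 = ipaddress.ip_address("2001:0db8:85a3:0000:0000:8a2e:0370:7334")

# version is 4 or 6; max_prefixlen is the address size in bits.
print(v4.version, v4.max_prefixlen)  # 4 32
print(v6.version, v6.max_prefixlen)  # 6 128

# IPv6 addresses are usually written in compressed form: leading zeros
# are dropped and one run of all-zero groups collapses to "::".
print(v6.compressed)  # 2001:db8:85a3::8a2e:370:7334
print(v6.exploded)    # 2001:0db8:85a3:0000:0000:8a2e:0370:7334
```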

Subnet Mask

A subnet mask is a 32-bit number (for IPv4) or a 128-bit number (for IPv6) that is used to divide an IP address into two parts: the network portion and the host portion. The subnet mask helps hosts and routers determine whether a packet's destination is on the local subnet, where it can be delivered directly, or on another network, where it must be routed.

In an IPv4 subnet mask, the network portion of the address consists of consecutive binary 1s, followed by consecutive binary 0s for the host portion. For example, a common subnet mask is 255.255.255.0, which corresponds to a binary representation of 11111111.11111111.11111111.00000000. This indicates that the first three octets (24 bits) of the IP address represent the network portion, and the last octet (8 bits) represents the host portion.
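The split between network and host portions is just a bitwise AND. Here is a minimal sketch of that arithmetic; the helper name `split_address` is my own.

```python
import ipaddress


def split_address(address, mask):
    """Return (network address, host number) for an IPv4 address and mask."""
    ip = int(ipaddress.IPv4Address(address))
    m = int(ipaddress.IPv4Address(mask))
    network = ipaddress.IPv4Address(ip & m)  # keep only the bits under the 1s
    host = ip & ~m & 0xFFFFFFFF              # keep only the bits under the 0s
    return str(network), host


print(split_address("192.168.1.1", "255.255.255.0"))  # ('192.168.1.0', 1)
```

With the mask 255.255.255.0, the first three octets survive the AND and become the network address, while the last octet is the host number within that network.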

In IPv6, the subnet mask is almost always written as a prefix length, indicating the number of consecutive 1 bits in the mask. For example, a /64 prefix length means the first 64 bits of the address are the network portion and the remaining 64 bits are the host portion, which corresponds to a mask of ffff:ffff:ffff:ffff:0000:0000:0000:0000.

Subnet masks play a crucial role in IP networking, enabling efficient allocation of IP addresses and facilitating routing of data packets between networks.

Subnet Mask Cheat Sheet

Here's a cheat sheet for subnet masks (CIDR notation), the total number of IPv4 addresses in each subnet, and the number usable by hosts:

| CIDR | Subnet Mask | Total IP Addresses | Usable Host Addresses |
| --- | --- | --- | --- |
| /32 | 255.255.255.255 | 1 | 1 (single host) |
| /31 | 255.255.255.254 | 2 | 2 (point-to-point links) |
| /30 | 255.255.255.252 | 4 | 2 |
| /29 | 255.255.255.248 | 8 | 6 |
| /28 | 255.255.255.240 | 16 | 14 |
| /27 | 255.255.255.224 | 32 | 30 |
| /26 | 255.255.255.192 | 64 | 62 |
| /25 | 255.255.255.128 | 128 | 126 |
| /24 | 255.255.255.0 | 256 | 254 |
| /23 | 255.255.254.0 | 512 | 510 |
| /22 | 255.255.252.0 | 1,024 | 1,022 |
| /21 | 255.255.248.0 | 2,048 | 2,046 |
| /20 | 255.255.240.0 | 4,096 | 4,094 |
| /19 | 255.255.224.0 | 8,192 | 8,190 |
| /18 | 255.255.192.0 | 16,384 | 16,382 |
| /17 | 255.255.128.0 | 32,768 | 32,766 |
| /16 | 255.255.0.0 | 65,536 | 65,534 |
| /15 | 255.254.0.0 | 131,072 | 131,070 |
| /14 | 255.252.0.0 | 262,144 | 262,142 |
| /13 | 255.248.0.0 | 524,288 | 524,286 |
| /12 | 255.240.0.0 | 1,048,576 | 1,048,574 |
| /11 | 255.224.0.0 | 2,097,152 | 2,097,150 |
| /10 | 255.192.0.0 | 4,194,304 | 4,194,302 |
| /9 | 255.128.0.0 | 8,388,608 | 8,388,606 |
| /8 | 255.0.0.0 | 16,777,216 | 16,777,214 |

Keep in mind that in most subnets the number of usable host addresses is two less than the total, because the first and last addresses are reserved for the network address and the broadcast address, respectively. The exceptions are /31, which is used on point-to-point links where both addresses are usable, and /32, which identifies a single host.
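You don't have to memorize the cheat sheet, since the `ipaddress` module can work out any row for you. The helper below is a sketch of my own; it assumes the usual convention that /31 and /32 have no reserved network or broadcast address.

```python
import ipaddress


def subnet_summary(cidr):
    """Summarize an IPv4 subnet given in CIDR notation, e.g. '10.0.0.0/24'."""
    net = ipaddress.ip_network(cidr, strict=False)
    total = net.num_addresses
    # Assumption: /31 and /32 keep every address usable; all other
    # prefixes lose the network and broadcast addresses.
    usable = total if net.prefixlen >= 31 else total - 2
    return {
        "netmask": str(net.netmask),
        "network": str(net.network_address),
        "broadcast": str(net.broadcast_address),
        "total": total,
        "usable": usable,
    }


print(subnet_summary("10.0.0.0/24"))
print(subnet_summary("192.168.1.77/26"))  # strict=False accepts a host address
```

The second call shows a common real-world question: given a host address and a prefix, which subnet is it in, and what are its boundaries?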

DNS

DNS stands for Domain Name System and is responsible for translating domain names into IP addresses. When you type a URL into your browser, DNS is responsible for finding the IP address associated with that domain name so that your browser can connect to the correct web server.
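You can ask the operating system's resolver to do the same lookup your browser does. This is a small sketch using the standard `socket` module; the `resolve` helper is my own name, and `localhost` is used so the example works without internet access.

```python
import socket


def resolve(hostname):
    """Return the sorted set of IP addresses the system resolver finds."""
    infos = socket.getaddrinfo(hostname, None, proto=socket.IPPROTO_TCP)
    # Each entry is (family, type, proto, canonname, sockaddr);
    # the IP address is the first element of sockaddr.
    return sorted({info[4][0] for info in infos})


print(resolve("localhost"))
```

Swap in a public domain name to see real DNS answers, which may include both IPv4 and IPv6 addresses.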

DHCP

DHCP stands for Dynamic Host Configuration Protocol and is responsible for assigning IP addresses to devices on a network. DHCP allows devices to join the network and automatically receive an IP address, along with related settings such as the subnet mask, default gateway, and DNS servers, making it easier to manage large networks with many devices.

HTTP

HTTP stands for Hypertext Transfer Protocol and is responsible for transferring data between web servers and web browsers. It is the protocol used for accessing web pages on the internet.
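Under the hood, HTTP/1.1 is just text sent over a TCP connection. The sketch below builds a minimal GET request by hand and parses the first line of a response. Both helper names are made up for this example, and the sample response is hard-coded rather than fetched from a real server.

```python
def build_get_request(host, path="/"):
    """Build the raw bytes of a minimal HTTP/1.1 GET request."""
    lines = [
        f"GET {path} HTTP/1.1",  # request line: method, path, version
        f"Host: {host}",         # the Host header is required in HTTP/1.1
        "Connection: close",
        "",                      # blank line ends the headers
        "",
    ]
    return "\r\n".join(lines).encode("ascii")


def parse_status_line(response):
    """Return (version, status code, reason) from raw response bytes."""
    status_line = response.split(b"\r\n", 1)[0].decode("ascii")
    version, code, reason = status_line.split(" ", 2)
    return version, int(code), reason


print(build_get_request("example.com"))

# Hard-coded sample response, for illustration only.
sample = b"HTTP/1.1 200 OK\r\nContent-Length: 2\r\n\r\nhi"
print(parse_status_line(sample))  # ('HTTP/1.1', 200, 'OK')
```

In practice you would use a library such as `urllib` or `requests`, but seeing the raw text makes packet captures of HTTP traffic much easier to read.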

ARP

ARP stands for Address Resolution Protocol and is responsible for translating IP addresses into MAC addresses. When devices communicate with each other on a network, they use MAC addresses to identify each other. ARP is responsible for finding the MAC address associated with a given IP address.

MAC address

A MAC address is a unique identifier assigned to each network interface card (NIC) on a device. MAC addresses are used to identify devices on a network and are essential for communication between devices.
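A MAC address is 48 bits, normally written as six hexadecimal octets. As a bit of background beyond what is covered above: the first three octets identify the vendor (the OUI), and two bits in the first octet mark multicast and locally administered addresses. Here is a small sketch that pulls those apart; `describe_mac` is a name I made up.

```python
def describe_mac(mac):
    """Break a MAC address string into its vendor prefix and flag bits."""
    octets = bytes(int(part, 16) for part in mac.replace("-", ":").split(":"))
    if len(octets) != 6:
        raise ValueError(f"expected 6 octets, got {len(octets)}")
    first = octets[0]
    return {
        "oui": octets[:3].hex(":"),                 # vendor prefix
        "multicast": bool(first & 0x01),            # lowest bit of first octet
        "locally_administered": bool(first & 0x02)  # second-lowest bit
    }


print(describe_mac("00:1A:2B:3C:4D:5E"))
print(describe_mac("ff:ff:ff:ff:ff:ff"))  # the Ethernet broadcast address
```

The locally administered bit is worth knowing about, since virtual machines and containers often use such addresses instead of vendor-assigned ones.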

VLAN

A VLAN is a virtual LAN that allows multiple devices to be grouped together as if they were on the same physical LAN. VLANs are often used in large networks to segment devices based on their function or security level.

Spanning Tree Protocol

The Spanning Tree Protocol (STP) is a protocol used to prevent loops in a network. Loops can occur when there are multiple paths between devices, and STP is responsible for identifying and disabling redundant paths to prevent data from being sent in a loop.
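The core idea can be sketched with a breadth-first search: pick a root switch, keep one path to every other switch, and block whatever links are left over. This is a heavily simplified illustration, not real STP, which elects the root by bridge ID, weighs paths by link cost, and exchanges BPDUs between switches. The switch names are hypothetical.

```python
from collections import deque


def spanning_tree(links):
    """Split links into (active, blocked) so the active set has no loops."""
    links = list(links)
    neighbors = {}
    for a, b in links:
        neighbors.setdefault(a, set()).add(b)
        neighbors.setdefault(b, set()).add(a)

    root = min(neighbors)  # stand-in for root bridge election (lowest ID)
    visited = {root}
    active = set()
    queue = deque([root])
    while queue:
        node = queue.popleft()
        for peer in sorted(neighbors[node]):
            if peer not in visited:
                visited.add(peer)
                active.add(frozenset((node, peer)))  # first path found wins
                queue.append(peer)

    all_links = {frozenset(link) for link in links}
    return active, all_links - active


# Three switches cabled in a triangle: one link must be blocked.
active, blocked = spanning_tree([("sw1", "sw2"), ("sw2", "sw3"), ("sw1", "sw3")])
print("active:", sorted(sorted(link) for link in active))
print("blocked:", sorted(sorted(link) for link in blocked))
```

The blocked link is not wasted: if an active link fails, the protocol recalculates and can bring the redundant path back into service.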

Routing

Routing is the process of directing data between devices on a network. Routing algorithms are used to determine the best path for data to travel between devices based on factors such as network topology and traffic congestion.
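When a router looks up a destination, it picks the most specific matching route, a rule known as longest-prefix match. Here is a minimal sketch of that lookup. The routing table and next-hop names are invented for illustration, and real routers use much faster data structures than a linear scan.

```python
import ipaddress

# Hypothetical routing table: (destination prefix, next hop).
ROUTES = [
    ("0.0.0.0/0", "isp-gateway"),      # default route matches everything
    ("10.0.0.0/8", "core-router"),
    ("10.1.0.0/16", "branch-router"),
]


def next_hop(destination, routes=ROUTES):
    """Return the next hop of the most specific route that matches."""
    dest = ipaddress.ip_address(destination)
    matches = []
    for prefix, hop in routes:
        network = ipaddress.ip_network(prefix)
        if dest in network:
            matches.append((network.prefixlen, hop))
    if not matches:
        return None
    return max(matches)[1]  # the longest prefix length wins


print(next_hop("10.1.2.3"))  # branch-router
print(next_hop("10.9.9.9"))  # core-router
print(next_hop("8.8.8.8"))   # isp-gateway
```

The address 10.1.2.3 matches all three routes, but the /16 is the most specific, so it wins over the /8 and the default route.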

Switching

Switching is the process of forwarding data between devices on a network. Switches use MAC addresses to determine where to send data packets within a network.
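A switch builds its MAC address table by watching the source address of every frame that arrives. The toy class below is my own sketch of that learning behavior, with shortened placeholder MAC addresses, and it ignores details like table aging and VLANs.

```python
class LearningSwitch:
    """Toy model of how a switch learns which MAC lives on which port."""

    def __init__(self, ports):
        self.ports = list(ports)
        self.mac_table = {}  # MAC address -> port it was last seen on

    def receive(self, in_port, src_mac, dst_mac):
        """Handle one frame and return the list of ports to send it out of."""
        self.mac_table[src_mac] = in_port  # learn where the sender lives
        if dst_mac in self.mac_table:
            return [self.mac_table[dst_mac]]  # known: forward to one port
        # Unknown destination: flood to every port except the incoming one.
        return [p for p in self.ports if p != in_port]


switch = LearningSwitch(ports=[1, 2, 3])
print(switch.receive(1, "aa:aa", "bb:bb"))  # [2, 3]  (bb:bb unknown, flood)
print(switch.receive(2, "bb:bb", "aa:aa"))  # [1]     (aa:aa learned on port 1)
print(switch.receive(1, "aa:aa", "bb:bb"))  # [2]     (bb:bb learned on port 2)
```

After the first exchange the switch stops flooding, which is why switched networks carry far less unnecessary traffic than old hub-based ones.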

OSPF

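OSPF stands for Open Shortest Path First and is a link-state interior routing protocol used in large networks. Each router builds a map of the network from link-state advertisements and runs Dijkstra's shortest-path algorithm over it to determine the best path for data to travel between devices, recalculating when the network topology changes.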
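OSPF routers compute their best paths with Dijkstra's shortest-path algorithm. Here is a compact sketch of that calculation over a made-up four-router topology; the router names and link costs are assumptions for illustration, and real OSPF adds areas, link-state flooding, and much more around this core.

```python
import heapq


def shortest_paths(graph, source):
    """Dijkstra's algorithm: lowest total cost from source to every router."""
    dist = {source: 0}
    heap = [(0, source)]
    while heap:
        cost, node = heapq.heappop(heap)
        if cost > dist.get(node, float("inf")):
            continue  # stale heap entry; a cheaper path was already found
        for peer, link_cost in graph[node].items():
            new_cost = cost + link_cost
            if new_cost < dist.get(peer, float("inf")):
                dist[peer] = new_cost
                heapq.heappush(heap, (new_cost, peer))
    return dist


# Hypothetical topology: router -> {neighbor: link cost}.
TOPOLOGY = {
    "A": {"B": 10, "C": 1},
    "B": {"A": 10, "C": 2, "D": 1},
    "C": {"A": 1, "B": 2, "D": 10},
    "D": {"B": 1, "C": 10},
}

print(shortest_paths(TOPOLOGY, "A"))
```

Notice that the cheapest way from A to B is through C (cost 3) rather than the direct link (cost 10), which is exactly the kind of decision link costs let you engineer.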

BGP

BGP stands for Border Gateway Protocol and is used to route data between different autonomous systems (AS) on the internet. It is responsible for routing data between internet service providers (ISPs) and is essential for the functioning of the internet.

EIGRP

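EIGRP stands for Enhanced Interior Gateway Routing Protocol and is an advanced distance-vector routing protocol, originally developed by Cisco, used in large networks. Rather than building a full map of the network, each router learns routes from its neighbors and uses the Diffusing Update Algorithm (DUAL) to choose loop-free best paths and adapt quickly to changes in network topology.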

eBPF

eBPF (extended Berkeley Packet Filter) is a technology that allows for the dynamic execution of code within the Linux kernel. It provides a way to instrument and modify the behavior of the kernel at runtime, allowing for powerful and flexible networking applications. eBPF is increasingly being used in the area of cloud-native networking, particularly in the realm of service mesh and container networking. It enables developers to gain visibility into the network and application behavior, and to implement advanced networking features such as load balancing and traffic shaping.

Advanced Networking Concepts

Network Virtualization: Basic and Advanced Concepts

Introduction to Network Virtualization

Network virtualization is a technology that allows multiple virtual networks to coexist on a single physical infrastructure. It enables the abstraction of network resources, allowing administrators to manage and provision resources more efficiently. Network virtualization provides benefits such as simplified management, reduced costs, enhanced security, and improved flexibility. By decoupling the underlying physical hardware from the logical network, administrators can adapt to changing business requirements more easily.

Basic Concepts of Network Virtualization

Virtual Networks

A virtual network is a logically isolated network that operates on shared physical network infrastructure. Virtual networks can be used to segment traffic for different departments, applications, or tenants while maintaining complete separation and security. Each virtual network behaves as an independent entity, with its own address space, policies, and management tools.

Overlay and Underlay Networks

In network virtualization, the underlying physical infrastructure is referred to as the underlay network, while the virtual networks created on top of it are called overlay networks. The underlay network provides the foundation for the connectivity and transport of data, while the overlay networks are responsible for providing logical separation and customized services for each tenant or application.

Advanced Concepts of Network Virtualization

Software-Defined Networking (SDN)

Software-Defined Networking (SDN) is a key enabler of network virtualization. SDN decouples the control plane, which makes decisions about how data packets should be forwarded, from the data plane, which is responsible for the actual forwarding of packets. By centralizing control, SDN allows for greater programmability, automation, and flexibility in managing network resources, leading to more efficient virtual network implementation and management.

Network Function Virtualization (NFV)

Network Function Virtualization (NFV) is another important concept related to network virtualization. NFV aims to replace traditional, specialized network hardware with software-based solutions running on standard servers, switches, and storage devices. This approach allows for the virtualization of network functions, such as firewalls, routers, and load balancers, resulting in cost savings, increased agility, and simplified deployment and management.

Network Slicing

Network slicing is a concept that involves creating multiple, isolated end-to-end virtual networks on a single physical network infrastructure. Each network slice can support specific requirements, such as latency, bandwidth, and security, tailored to the needs of different applications or tenants. Network slicing is particularly relevant for 5G networks, as it enables service providers to deliver customized network services for diverse use cases, such as IoT, augmented reality, and autonomous vehicles.

Summing it up

Network virtualization is an essential technology that allows organizations to create, manage, and deploy virtual networks on shared physical infrastructure. By leveraging concepts like Software-Defined Networking, Network Function Virtualization, and network slicing, network virtualization offers numerous benefits, such as simplified management, cost savings, enhanced security, and improved flexibility. As networks continue to evolve, network virtualization will play a critical role in addressing the increasing demand for agile networks.

Network Security

Identity and Access Management (IAM)

Identity and Access Management (IAM) is a comprehensive framework that helps organizations manage, control, and secure access to their digital resources. IAM ensures that the right users have the appropriate access to the correct resources, at the right time, and for the right reasons. It involves various processes and technologies to authenticate, authorize, and audit user access to systems, applications, and data.

Key components of IAM include:

Identity Management: This involves the creation, maintenance, and termination of user accounts and their associated attributes, such as usernames, email addresses, and roles. It ensures that each user has a unique digital identity within the organization.

Authentication: Authentication is the process of verifying the identity of a user, device, or system attempting to access a network resource. Common authentication methods include username/password combinations, digital certificates, and multi-factor authentication (MFA).

Authorization: Authorization determines the level of access granted to an authenticated user or device. This involves assigning permissions and privileges to the user or device based on their role, group membership, or other criteria. Access control lists (ACLs), role-based access control (RBAC), and attribute-based access control (ABAC) are common methods for implementing authorization.

Access Management: Access management involves the enforcement of the organization's access policies, ensuring that users and devices can only access the resources they are authorized to use. This includes the implementation of single sign-on (SSO), which allows users to access multiple applications and services with a single set of credentials.

Audit and Compliance: IAM systems should maintain logs and records of user activities, such as login attempts, changes to user roles, and resource access. These records can be used for auditing and compliance purposes, ensuring that the organization's security policies are being followed and helping to identify potential security risks or breaches.

IAM helps organizations maintain security, improve productivity, and comply with regulatory requirements by providing a centralized, efficient way to manage and control access to their digital resources.
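To make the authorization piece concrete, here is a bare-bones sketch of a role-based access control (RBAC) check. The role names and permissions are invented for this example; a real IAM system would load them from a policy store and log each decision for auditing.

```python
# Hypothetical roles and the actions each one is allowed to perform.
ROLE_PERMISSIONS = {
    "viewer": {"read"},
    "operator": {"read", "restart"},
    "admin": {"read", "restart", "delete"},
}


def is_authorized(user_roles, action):
    """Return True if any of the user's roles grants the requested action."""
    return any(
        action in ROLE_PERMISSIONS.get(role, set())
        for role in user_roles
    )


print(is_authorized(["viewer"], "read"))               # True
print(is_authorized(["viewer"], "delete"))             # False
print(is_authorized(["viewer", "operator"], "restart"))  # True
```

Unknown roles grant nothing, so the check fails closed, which is generally the safer default for access control.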

Encryption

Encryption is the process of converting data into a scrambled, unreadable format to protect it from unauthorized access. In the context of network security, encryption is used to secure data transmitted over networks, ensuring privacy and integrity. Common encryption protocols and standards include Transport Layer Security (TLS), its now-deprecated predecessor Secure Sockets Layer (SSL), and Internet Protocol Security (IPsec).
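In Python, TLS settings for a client live in an `ssl.SSLContext`. The sketch below starts from the library's secure defaults and refuses anything older than TLS 1.2; the function name and the choice of TLS 1.2 as the floor are my own assumptions, so adjust them to your organization's policy.

```python
import ssl


def strict_client_context():
    """Build a client-side TLS context that verifies the server's identity."""
    # create_default_context() enables certificate and hostname verification.
    context = ssl.create_default_context()
    # Assumption for this sketch: refuse protocol versions older than TLS 1.2.
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


ctx = strict_client_context()
print(ctx.verify_mode == ssl.CERT_REQUIRED)  # True
print(ctx.check_hostname)                    # True
```

You would then pass this context to something like `ctx.wrap_socket(sock, server_hostname=...)` or an HTTP client so that every connection is encrypted and the server's certificate is checked.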

Load Balancing

Load balancing is the process of distributing network traffic across multiple servers to ensure that no single server is overwhelmed with too much traffic. This improves the overall performance, reliability, and availability of network resources. Load balancing can be implemented using hardware, software, or a combination of both. Common load balancing methods include round-robin, least connections, and server response time.
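Two of those methods are simple enough to sketch in a few lines. The classes and server names below are made up for illustration, and a real load balancer would also run health checks and handle concurrency.

```python
import itertools


class RoundRobin:
    """Hand out servers in a fixed rotation."""

    def __init__(self, servers):
        self._cycle = itertools.cycle(servers)

    def pick(self):
        return next(self._cycle)


class LeastConnections:
    """Send each new request to the server with the fewest open connections."""

    def __init__(self, servers):
        self.active = {server: 0 for server in servers}

    def pick(self):
        server = min(self.active, key=self.active.get)
        self.active[server] += 1  # a new connection is now open
        return server

    def release(self, server):
        self.active[server] -= 1  # the connection has finished


rr = RoundRobin(["web1", "web2"])
print([rr.pick() for _ in range(3)])  # ['web1', 'web2', 'web1']

lc = LeastConnections(["web1", "web2"])
print(lc.pick(), lc.pick())  # web1 web2
lc.release("web1")
print(lc.pick())             # web1
```

Round-robin works well when requests cost about the same, while least connections copes better when some requests hold a server much longer than others.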

High Availability

High availability refers to the design and implementation of systems and networks that can continue to operate with minimal downtime or disruption in the event of a failure. This is achieved by introducing redundancy, fault tolerance, and failover mechanisms into the network infrastructure. Techniques for achieving high availability include clustering, replication, and the use of redundant components such as power supplies and network links.

Network Monitoring and Troubleshooting

Network monitoring involves the continuous observation and measurement of a network's performance, health, and security. It helps network administrators identify and resolve issues before they impact users or services. Network monitoring tools can track various parameters, such as bandwidth usage, latency, packet loss, and device status.

Troubleshooting is the process of identifying and resolving problems in a network. This involves systematic investigation and analysis of issues, often using specialized tools and techniques. Effective troubleshooting requires a deep understanding of network protocols, architectures, and equipment, as well as strong problem-solving skills.

Cloud-Native Networking Concepts

Microservices

Microservices is an architectural pattern that breaks down large, monolithic applications into a collection of small, loosely coupled, and independently deployable services. Each microservice is responsible for a specific function or feature and communicates with other services through APIs. This approach offers benefits like improved scalability, flexibility, and easier maintenance.

Service Mesh

A service mesh is a dedicated infrastructure layer for facilitating service-to-service communication in a microservices architecture. It provides capabilities like load balancing, traffic management, security, and observability for inter-service communication. Some popular service mesh implementations are:

Istio: An open-source service mesh that provides traffic management, security, and observability features. It is platform-agnostic and can be used with various container orchestration platforms like Kubernetes.

Linkerd: Another open-source service mesh that focuses on simplicity, security, and performance. Linkerd is designed to be lightweight and easy to integrate with existing applications.

Container Networking

Container networking is the process of connecting and managing network communications between containers in a containerized environment. It provides the necessary infrastructure for container-to-container and container-to-external communication. One prominent example of container networking is:

- Kubernetes Networking: Kubernetes is a popular container orchestration platform that provides various networking constructs, such as pods, services, and ingress controllers, to manage network communication between containers and external systems.

Hybrid Networking

Hybrid networking refers to the integration of on-premises and cloud-based network resources, enabling organizations to leverage the benefits of both environments. Key components of hybrid networking include:

VPN (Virtual Private Network): A VPN establishes secure, encrypted connections between on-premises and cloud resources, allowing data to be transmitted securely over public networks.

Direct Connect: A direct connection (such as AWS Direct Connect or Azure ExpressRoute) provides a dedicated, private network link between on-premises data centers and cloud service providers. This approach offers more consistent performance and reliability than VPN connections over the public internet, and keeps traffic off public networks.

Conclusion

Networking is a vast subject, and it is essential to have a good understanding of its fundamentals. In this blog, we have covered both basic and advanced concepts of networking, finishing with cloud-native networking concepts. This knowledge will be beneficial for platform engineers and developers who are responsible for designing and maintaining complex network infrastructures.